Freedom of expression & content moderation on social media: the promises and challenges of experimental accountability

This blog post is part of a collaborative education project on social media and platform governance undertaken by Prof. Nicolo Zingales (Fundação Getulio Vargas), Thales Bertaglia (Studio Europa/Institute of Data Science, UM) and Dr. Catalina Goanta (UM).

Authors: Chloé Rozindo Sandoli; Hassan Almawy Ramalho de Araujo; Karin Matilda Matsdotter Ellman; Wanda Kristina Mellberg; Laura Carvalho Pessanha Oliboni; Leonardo Neuenschwander Chammas do Nascimento; Larissa Tito Pereira Soares; Nicolo Zingales.

Every social media platform has its own set of community guidelines detailing what platform users can say and do, and the consequences of possible violations. But how do these guidelines fare from a freedom of expression standpoint? To understand the impact of content moderation on freedom of expression on different social media platforms, the Cyberlaw clinic at Fundação Getúlio Vargas law school in Rio de Janeiro produced, between February and June 2021, a dataset of approximately 900 experimental observations regarding the enforcement of moderation rules on Instagram, Twitter and TikTok. With this blog post, we want to share our highlights from these observations, describing the methodology and pointing to some of the promises and challenges identified in the process.

1. Incorporation of FoE standards into social media platform rules: work in progress?

As an initial step of this project, participants analyzed the guidelines of each platform from the perspective of human rights law. In particular, the clinic started from the assumption that, since these platforms can effectively have as much impact on freedom of expression as States do (if not more), the principles of legality, legitimacy and proportionality should apply to the rules they set. In other words, any restriction of freedom of expression must be grounded in a law (here, a guideline rule) which is clear and accessible to everyone; it must pursue a legitimate purpose (recognized in international law); and it must be necessary and proportionate to the achievement of that purpose.

To verify that, each rule of the guidelines was assessed in accordance with the general value that it aimed to protect (for instance, safety or privacy), and the category of activities that it regulated (for instance, “violence”, “hateful conduct”, or “impersonation”). Then, the rules were linked to the international freedom of expression standards that could have provided normative grounding for each restriction, ranging from the International Covenant on Civil and Political Rights (ICCPR) to the Council of Europe conventions and several UN reports. It was relatively easy to trace back the concerns addressed in those guidelines to several legal instruments, including the categories of legitimate restrictions identified by article 19 (3) ICCPR: respect for the rights or reputations of others; the protection of national security; the protection of public order; the protection of public health; or the protection of public morals. However, several issues of legality and proportionality of the rules and their enforcement were raised, to be further explored as part of the empirical research (see below, sections 2 and 3).

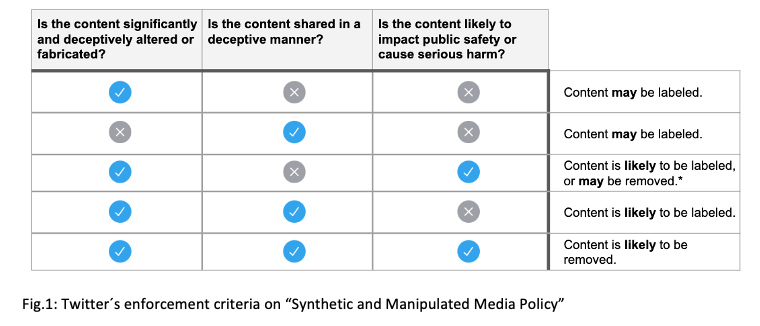

In particular, those issues were divided into four different categories: norm legality, regarding the clarity and predictability of the rules; enforcement legality, regarding the clarity of the consequences of a possible violation; norm proportionality, regarding how proportionate those rules are to the achievement of a purpose that is recognized as legitimate under international law; and, finally, enforcement proportionality, or how proportionate the sanctions were to those violations. Overall, the biggest and most recurrent problem identified was the use of open-ended and subjective concepts, not only in the definition of prohibited activities but also with regard to their enforcement (through terms such as ‘generally’ and ‘based on a number of factors’, without further clarification). In addition to being incompatible with the principle of legality, this tendency made it difficult to assess compliance with the principle of proportionality. Furthermore, on some occasions (see, for instance, TikTok's platform security policy and Twitter's “illegal or regulated goods or services” policy) the platforms did not even specify the sanctions applicable to prohibited conduct. On the other hand, a best practice was identified with regard to enforcement legality in Twitter's synthetic or manipulated media policy, which could easily be extended to other areas and platforms: although they do not reveal all the factors that are taken into account in such moderation decisions, the guidelines explain the questions that guide enforcement by content moderators, including the specific action they are likely to take (see Fig. 1).

Across the three platforms, one rule in particular was found to give rise to proportionality issues: the permanent or indefinite suspension of one's account as a sanction for certain kinds of violations (for example, in the case of a “violent threat”). Although account suspension is clearly one of the essential tools for effective content moderation, the indefinite or permanent nature of such a sanction appears to be in conflict with the principle of proportionality. This view finds confirmation in the US Supreme Court's ruling in Packingham v. North Carolina, concerning a North Carolina statute that made it a felony for a registered sex offender to gain access to a number of websites, including social media websites like Facebook and Twitter. The Court held that, by categorically prohibiting sex offenders from using those websites, North Carolina had denied access to what for many are the principal sources for knowing current events, checking ads for employment, speaking and listening in the modern public square, and otherwise exploring the vast realms of human thought and knowledge.

The permissibility of permanent or indefinite account suspension was also a core issue in the recent decision of the Oversight Board on Facebook's suspension of the account of former US president Donald Trump: there, the Board required Facebook to reconsider this “arbitrary penalty” and decide on an “appropriate penalty”, based on the gravity of the violation and the prospect of future harm, in accordance with the Rabat Plan of Action on the prohibition of advocacy of national, racial or religious hatred. Yet it remains to be seen how Facebook and other platforms can practically incorporate into their guidelines the granularity of the Rabat Plan of Action, which lists criteria such as the context of the speech, the status and intention of the speaker, the content and form of the speech, its extent and reach, and the imminence of harm. Perhaps the approach taken by Twitter in Fig. 1 may set social media platforms on the right path.

To be fair, it should be recognized that content moderation is complex, and that international human rights law only provides high-level guidance. In part, this may explain the lack of detail in the platforms' content governance rules. However, the extent to which this argument constitutes a valid justification for the opacity of content moderation is questionable: the protection of fundamental rights and the rule of law should not depend solely on international legal instruments, nor should it be left to the willingness of platforms to provide extensive clarifications in their guidelines. Looking forward, it is important to identify what mechanisms can be used to ensure that fundamental rights do not fall through the cracks between these two normative forces. One safeguard that has been proposed, including in the EU's draft Digital Services Act (art. 31), is the ability for vetted researchers to access the data of very large online platforms to conduct research that contributes to the identification and understanding of systemic risks in relation to the dissemination of illegal content through their services, the intentional manipulation of their services, and any negative effects on the exercise of fundamental rights. In the following sections, we illustrate the added value of this type of evidence-based research even within the limited framework in which we operated, prior to the introduction of such an obligation. We submit that access to platform data would bolster more robust and significant forms of accountability through experimental engagement with content moderation rules and practices.

2. Posting and requesting moderation of “borderline” content: a recipe for inconsistent treatment?

As a first empirical experiment, participants posted content that would ostensibly breach the guidelines, particularly if those were to be applied literally and mechanically. After waiting a few days to see if the post triggered any proactive platform moderation, participants also reported one another for these posts, to see if this would trigger reactive platform moderation. The process was designed to test the level of rigidity of content moderation and detect any interesting enforcement patterns across the three platforms. A total of 234 posts were made, covering the vast majority (95%) of the rules of TikTok, Twitter and Instagram. The general conclusion was that, while many rules as written could be interpreted as disproportionate restrictions of freedom of expression, the way in which they are interpreted often prevents an overbroad application: for example, posts that squarely fall within the scope of Instagram's prohibition of “comparing people to... insects” did not lead to removals, and neither did the joking use of what would otherwise constitute a violent threat (“I am going to kill her”). Similarly, a high threshold of seriousness of harm seems to apply to the prohibition of “content that depicts graphic self-injury imagery” on Instagram, and to “Content that insults another individual on the basis of hygiene”, “Dangerous games, dares, or stunts that might lead to injury” and “Content that disparages another person's sexual activity” on TikTok. Thus, the issue that came out strongest was not one of over-removal, but rather one of under-enforcement of provisions designed to address important societal concerns. However, it should be noted that a tendency towards stricter application of the rules was registered for more visually identifiable categories like nudity, suicide and self-harm, minor safety and violent extremism, which accounted for 70% of the posts that got removed, although they represent only 49% of all rules across the platforms.
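To make the last comparison concrete, the short sketch below turns the two reported percentages into a representation ratio. It is only an illustration: it assumes that posts were spread roughly in proportion to the number of rules in each category, which the figures above do not confirm, and the function and variable names are our own.

```python
# Shares reported in the text for the visually identifiable categories
# (nudity, suicide and self-harm, minor safety, violent extremism).
SHARE_OF_REMOVED_POSTS = 0.70
SHARE_OF_RULES = 0.49


def representation_ratio(share_of_removals: float, share_of_rules: float) -> float:
    """Return removals share divided by rules share.

    A value above 1 means the categories are over-represented among removals.
    Assumption: posts were distributed roughly in proportion to the rules.
    """
    return share_of_removals / share_of_rules


visual = representation_ratio(SHARE_OF_REMOVED_POSTS, SHARE_OF_RULES)
other = representation_ratio(1 - SHARE_OF_REMOVED_POSTS, 1 - SHARE_OF_RULES)

print(f"visually identifiable categories: {visual:.2f}")
print(f"all other categories: {other:.2f}")
```

Under that assumption, the visually identifiable categories appear among removals about 1.4 times as often as their share of the rules would suggest, while the remaining categories appear about 0.6 times as often.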

In some cases, under-enforcement may have been due to the inability of the moderators to understand cultural references made by the post(er). For instance, one of the participants posted on Instagram a painting of Tamerlane, a Turco-Mongol conqueror known to be one of the biggest mass murderers in human history (having murdered 17 million people, around 5% of the world's population at the time), saying that he was “a role model of a leader” and that “everything he did is to be commended and honored”. Even after several reports, nothing happened to the post, despite there being a rule literally stating that Instagram “does not allow content that praises, supports or represents events that Facebook designates as terrorist attacks, hate events, mass murders or attempted mass murders, serial murders, hate crimes and violating events”. The probable reason is that Tamerlane is not very well known nowadays, although he was much feared in the 14th century. The same reason may help explain why TikTok did not moderate a post that described as a “cleansing” the police operation against suspected drug traffickers carried out in May 2021 in Jacarezinho, Rio de Janeiro, which led to the massacre of 27 people. Considering that the author of the post in question had almost 800k followers, the oversight was particularly worrying.

Yet in other instances, it seems harder to attribute the lack of moderation to cultural insensitivity. By way of example, one of the participants reposted on Instagram a propaganda video from Saddam Hussein's regime, which displayed armed mobs, military parades and burning US and Israeli flags. Although this particular subject is well known today, the video was not removed or flagged. What is more, the post was originally made by a meme page on Instagram with over 35 thousand followers, where the video had been viewed by well over 40 thousand people; hence, it cannot be said that the lack of moderation was due to the relatively low reach of the post.

It is also important to mention that some inconsistencies in the treatment of similar types of content were perceived not only across platforms, but also within the very same platform. On TikTok, for example, a participant posted a video of a man smoking pot and saying that it was his favorite hobby. Since there is a rule prohibiting “content that depicts or promotes drugs, drug consumption, or encourages others to make, use, or trade drugs or other controlled substances”, it was unsurprisingly removed. However, on the same platform, another post showing a joint and a lighter inside a heart shape made from a pearl necklace was not removed. This leaves us wondering what particular factors are used to categorize content as falling within the prohibition.

3. Call me “special”: moderation of influencer content and anti-influencer content

As a second empirical experiment, participants requested moderation of content posted by influencers, defined as “persons with a large number of followers, at least 10k”. The goal of the experiment was to detect any differences between the moderation applied to influencers and the moderation of content posted by “regular” users, that is, those with a more limited number of followers. To do that, participants relied on a draft study conducted by FGV's Center for Technology and Society regarding the dissemination of COVID-related misinformation via Twitter by Brazilian politicians. Each participant took 5 politicians from the list and for each of them followed these instructions: (1) Report any infringing post; (2) Look for other obviously violating posts in their recent feed; and (3) Search for their account on Instagram and TikTok, and in case an account was identified, repeat the procedure under (1) and (2).

In a third and final empirical experiment, participants requested moderation of speech that targeted specific influencers, or comments that were made in reaction to an influencer's post. This part aimed to detect any differences in moderation of derogatory content or comments posted against influencers, as opposed to content posted by influencers. Participants were instructed to identify 5 to 10 posts each, and report them to the platforms as harassment, hate speech or violence against individuals.

The results obtained in these two experiments were encouraging, though obviously not statistically robust due to the limited number of observations (approximately 250). First, there appeared to be a more permissive approach to the moderation of content posted by influencers, resulting in the availability of content that would likely have been moderated if it had been posted by a “regular” user (for instance, as a violation of the COVID-19 policy). While this may be due to the newsworthiness of influencer speech, we do not know what role the platforms give to such considerations. Again, this is an issue which was highlighted by the recent Oversight Board decision on Trump's account suspension, requiring Facebook to ensure that it dedicates adequate resourcing and expertise to assess risks of harm from influential accounts globally. Our observations showed that this problem is not limited to the Facebook family of companies.

Second, there seemed to be a stricter and swifter application of the rules when it comes to content (or comments) posted against influencers. For instance, Instagram reviewed the reports on comments to Dan Bilzerian's posts within 30 minutes, and the reported comments on Logan Paul's Instagram within 15 minutes. Similarly, TikTok deleted content that called certain celebrities “pedophiles” and “rapists” at a speed that was unmatched by reports of a similar type made in relation to less influential users. One possible factor behind this proactive approach is that influencers may employ social media managers to cleanse their comments feed, which could also explain why we observed no negative comments on the posts of certain influencers on certain platforms: for instance, divisive celebrities such as Jair Bolsonaro, Justin Bieber and Miley Cyrus had no negative comments at all on some of their posts on Instagram, despite the large number of “haters” that each of them attracts. At the same time, it would be misleading to draw this general conclusion for all influencers: for instance, also on Instagram, a participant posted a picture of Donald Trump and called him “useless” and “worthless”. In spite of a rule saying, literally in those words, that the user can’t target a person based on “expressions about being less than adequate, including, but not limited to: worthless, useless”, nothing happened to the post, even after a specific report was made to Instagram. This may be linked to the fact that such criticism of Donald Trump may be perceived as political speech, and that “free communication of information and ideas about public and political issues between citizens, candidates and elected representatives is essential”, in the words of the UN Human Rights Committee's General Comment No. 34 on Article 19 ICCPR. Yet we do not know whether this is the result of a specific exception, or how such an exception is applied in practice.

4. Summing up: the bumpy road to experimental accountability for content moderation

Content moderation experiments can be a fruitful source of learning and transparency on platform governance mechanisms. This work has only scratched the surface, raising several questions and suppositions, a full answer to which would require those experiments to be conducted at scale. Mandated access to platform data on content moderation could substantially improve the ability of researchers to address those questions: by providing benchmarks and patterns across different categories of content and of users, it would enable researchers to be more targeted and surgical with their empirical inquiries. For instance, it would enable researchers to focus on the factors that are most important in triggering moderation responses, or to test the operation of different criteria in determining the consequences for a violation of a given norm.

Even in the limited framework within which it operated, however, the clinic discovered a couple of procedural flaws in the reporting mechanisms that need fixing. First, there was some discrepancy between the platform rules and the options for reporting. Twitter, for example, had the most detailed rules, but many of them were simply not available as reporting options: for instance, despite its extensive ‘Civic Integrity’ policy, there were no options on the platform to report posts infringing these rules specifically, with the result that such content had to be reported as “content that is disrespectful or offensive”. The opposite was also true, in that some reporting options had no counterpart in the rules: for instance, fake news and misinformation were not explicitly contemplated in Instagram’s rules, but were included in the reporting options.

Second, follow-up on reports made to the platform was absent or insufficient. In the case of Instagram, for instance, the follow-up information regarding submitted reports sometimes disappeared from the list of account notifications; and on another occasion, the platform stopped providing such information, in particular after the user had already submitted 5 reports (on different types of content). One platform (TikTok) offered adequate follow-up features with regard to content posted, but not with regard to comments made on someone else's post, in which case the user would simply receive the standard message "We will take action if this content violates [insert the rule]".

With a view to improving the accountability of platforms' moderation processes through experiments such as those described here, participants came up with the following suggestions to platform operators:

- Disclose the range of sanctions applicable for each type of violation, and publish periodic transparency reports on the sanctions imposed over a given reference period, including a breakdown by geographical context. Importantly, this reporting would need to be made in a sufficiently aggregate form, in order to preserve the privacy of the individuals concerned.

- Better incorporate local moderators into the process, so as to allow the platform to understand cultural and linguistic references. One way to do this is to let users flag content as culturally sensitive, by adding an option to the reporting interface that allows the selection of the relevant country or region (irrespective of the user's location), so that the post is sent to a local moderator.

- Create a “waiting room” for content that constitutes a prima facie violation of the guidelines. The first screening could be fully algorithmic (matching pictures, words, comments, hashtags to key concepts of the guidelines), prompting a human moderator to review this within a short timeframe (for instance, up to 24 hours). Depending on the applicable rule, the content would be either published or “shadow-banned” (i.e., made visible only to the speaker or a limited set of users) during this interim period. Furthermore, the user could be invited to the waiting room to submit evidence or observations, to help the moderator reach a decision more quickly.

- Create a help center to follow up on content moderation requests, in which a user who has made a request for moderation can track the status of their requests. Importantly, this information would need to be provided separately from other notifications, so as to enable users to conveniently retrieve it.

- Permit use of the platform to test content moderation as applied to different types of content, users and activities: this may be done, for instance, by allowing the creation of multiple accounts per user, as well as the submission of simultaneous or coordinated requests for moderation of particular pieces of content. To that end, however, some important questions need to be addressed regarding how these initiatives would intersect with the legitimate aim of preventing people from using the platform to generate spam, false engagement or targeted attacks, to artificially influence political opinions, etc.
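The “waiting room” suggestion above can be made more tangible with a minimal sketch of its first, algorithmic screening step. Everything in it is hypothetical and purely illustrative: the rule names, the trigger keywords, the interim treatment attached to each rule and the naive word matching are our own assumptions, not a description of how any platform operates.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from enum import Enum
from typing import List, Optional


class InterimStatus(Enum):
    PUBLISHED = "published"
    SHADOW_BANNED = "shadow_banned"


# Hypothetical mapping: guideline concept -> (trigger keywords, interim treatment).
RULES = {
    "violent_threat": ({"kill", "shoot"}, InterimStatus.SHADOW_BANNED),
    "drugs": ({"#weed", "joint"}, InterimStatus.PUBLISHED),
}

# Human review window suggested in the text (up to 24 hours).
REVIEW_WINDOW = timedelta(hours=24)


@dataclass
class WaitingRoomEntry:
    post_text: str
    matched_rule: str
    interim_status: InterimStatus
    review_deadline: datetime
    # Evidence or observations the user may submit to help the moderator.
    user_observations: List[str] = field(default_factory=list)


def screen(post_text: str, now: datetime) -> Optional[WaitingRoomEntry]:
    """Return a waiting-room entry for a prima facie violation, else None.

    Naive whitespace tokenization: punctuation attached to a word
    prevents a match, so this is a sketch rather than a usable filter.
    """
    tokens = set(post_text.lower().split())
    for rule, (keywords, interim) in RULES.items():
        if tokens & keywords:
            return WaitingRoomEntry(post_text, rule, interim, now + REVIEW_WINDOW)
    return None


entry = screen("I am going to kill her", datetime(2021, 5, 1, 12, 0))
```

In this sketch the joking threat from section 2 would be held in the waiting room, shadow-banned, until a human moderator reviews it by the deadline. The point of the design is that the algorithm only queues content, while the decision on context (a joke, political speech, a cultural reference) stays with the moderator.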
