How our brain predicts sounds | ERC grant for Dr. Federico De Martino

Dr. Federico De Martino of the Department of Cognitive Neuroscience at the Faculty of Psychology and Neuroscience has been awarded a European Research Council (ERC) Consolidator Grant.

Knock, knock, knock

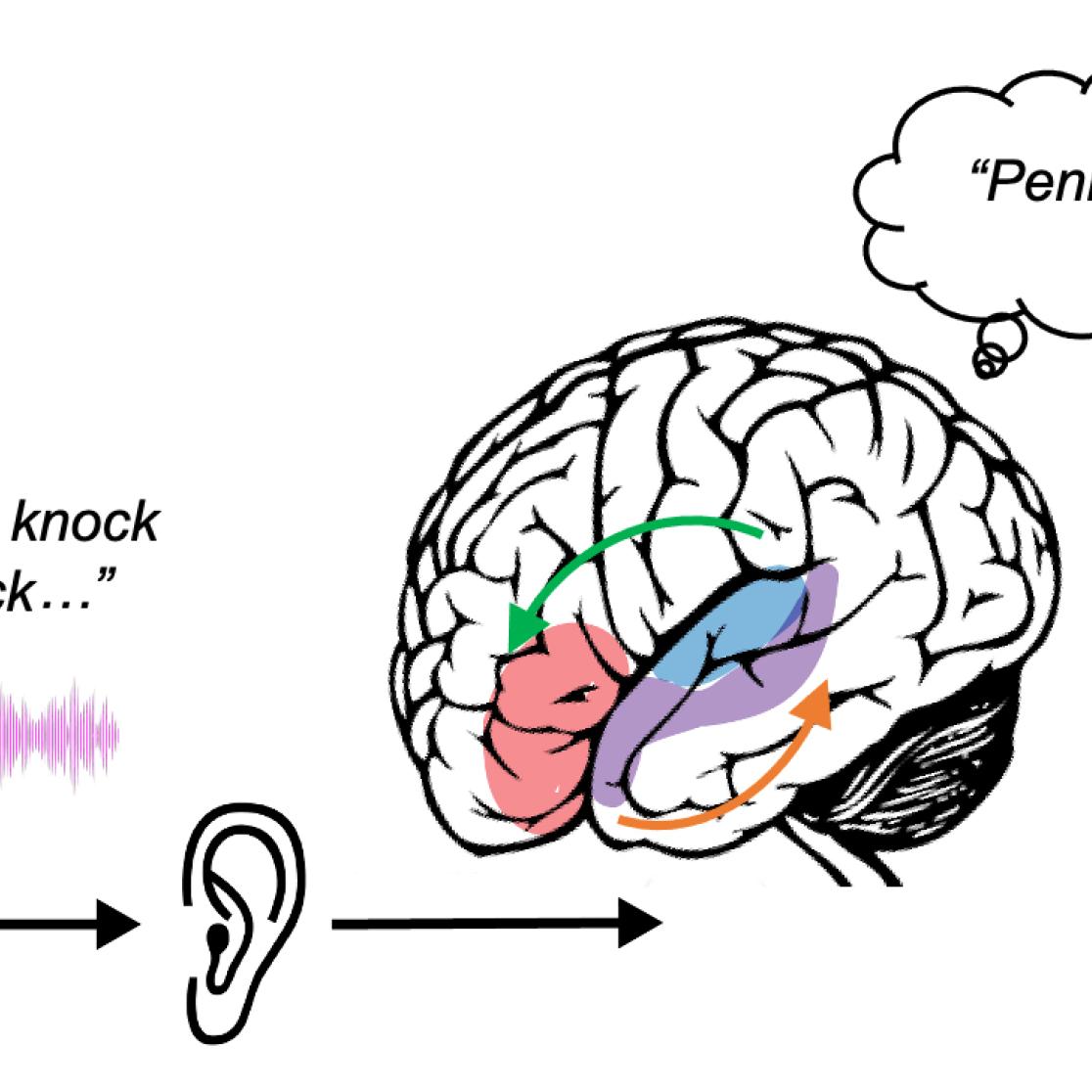

Dr. De Martino will study hearing in context, because what we hear relies heavily on context. “In the comedy series The Big Bang Theory, Sheldon always knocks three times on the door before calling Penny’s name, and this is always repeated three times in a row”. By watching the series, we learn this pattern, and disrupting it creates several comedic moments. “When we don’t hear what we expect, we get a sense of surprise, or even discomfort. We want Sheldon to finish the pattern”. This sense of expectation of what is to come can be found throughout our senses. The phenomenon is abundant in movies, music and speech: what comes before allows us to predict what comes next. “We know that we anticipate in our hearing, but we don’t know how it works. That is what I want to find out”.

Proving or disproving the theory

For a long time, there has been a theory about how this predictive mechanism works. “It’s all about the interaction between brain regions and between our senses. These interactions are shaped by the knowledge of the world around us”. This means that the different stages of sound processing need to interact. Your brain is constantly busy processing what you are hearing now and what can be predicted based on what you have heard before. “I believe that this passing of information among brain regions is going to be the foundation of the mechanism. But we’ll have to wait and see; maybe we’ll find something completely new and unexpected”.

Into the unknown

At Maastricht University, we can use ultra-high-field computational imaging with our fMRI scanners to look at the smallest possible structures in the human brain. “This is really where we jump into the unknown. Will we see an architecture that confirms the theory, or will we have to modify our understanding?”

Looking closely at the mechanism

To study the mechanisms behind our auditory predictions, Dr. De Martino will combine two different methods of studying brain activity. At Oxfordlaan 55 in Maastricht, Dr. De Martino will use our state-of-the-art functional magnetic resonance imaging (fMRI) scanners to read brain activity while people listen to simple sounds that are then disrupted. “We will, for example, play a simple musical melody that we will repeat a few times. Then we will drastically change the last note to create an unexpected sound”.

“You can picture the brain as a layered cake, each layer of a different flavour. Similarly, our brains have different layers with different functions and connections”. Ultra-high-field computational imaging allows researchers to see each layer individually. “This way we do not just taste a mixture of flavours, but each flavour on its own”.

“We will also use magnetoencephalography (MEG). This we will do at Radboud University in Nijmegen with the help of Professor Floris de Lange, an expert in predictive coding”. The benefit of MEG is that it is very good at showing the timing of brain activity, whereas fMRI is better at showing its location. “The combination of these methods will give us the most complete picture possible”.

Fundamental understanding

The project will be divided into two overlapping phases: first, data collection and analysis, and second, combining the information from the fMRI and MEG experiments. “I’m a fundamental neuroscientist, so my main goal is to understand the crucial aspects of how we hear. This knowledge could then be used in more applied or clinical settings”. We use our predictions every day. They help us, for example, when a sound is noisy or interrupted. “If we’re on a Zoom call with a bad connection, my speech may get interrupted or become noisy. However, you can, more or less, predict what I was going to say”.

Some real-world examples

Problems in combining the predictions our brain makes with what we actually hear may underlie a number of clinical conditions. For example, our brain’s predictions may become too strong. “When this happens, you can hear things that are not there, because your brain is making that prediction. This may happen in people who have auditory hallucinations or tinnitus”.

People wearing hearing aids can experience difficulties when there is a lot of noise in a room. “Better understanding this predictive mechanism may result in improvements to the algorithms used in hearing aids and ultimately help a lot of people”.
